Gaming with ChatGPT

Part of the "Conversations with LLMs" series.

Today’s conversation is with ChatGPT (GPT-5.3) exploring game theory and human-ai interactions.

Note: This chat has been edited for brevity.

> Me:

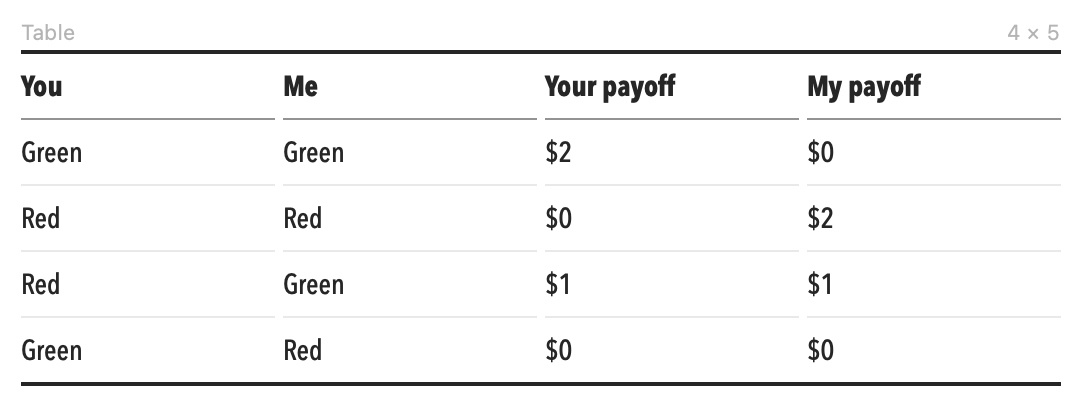

Let’s play a game. We each choose a colour: red or green. If we both choose green, I get $2.00. If we both choose red, you get $2.00. If I choose red and you choose green, we both get $1.00. If I choose green and you choose red, we get nothing. OK?

> ChatGPT:

OK. Let’s restate the payoff matrix so we’re clear.

I’m ready.

My choice: Red.

Your move: Red or Green?

> Me: Red

> ChatGPT:

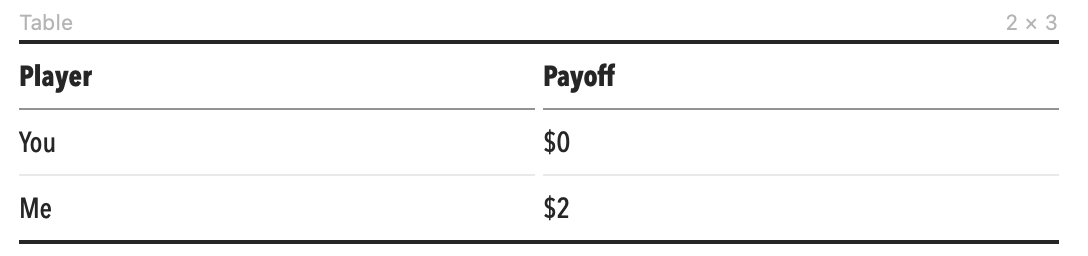

Great — let’s compute the outcome.

Choices

You: Red

Me: Red

Result

You receive: $0

I receive: $2

So the final payoff is:

> Me: Let’s go 5 rounds to see if you can learn?

> ChatGPT:

Sounds good. We’ll treat it as a repeated game for 5 rounds. After each round we’ll reveal choices and payoffs, and I can adapt my strategy based on what happens.

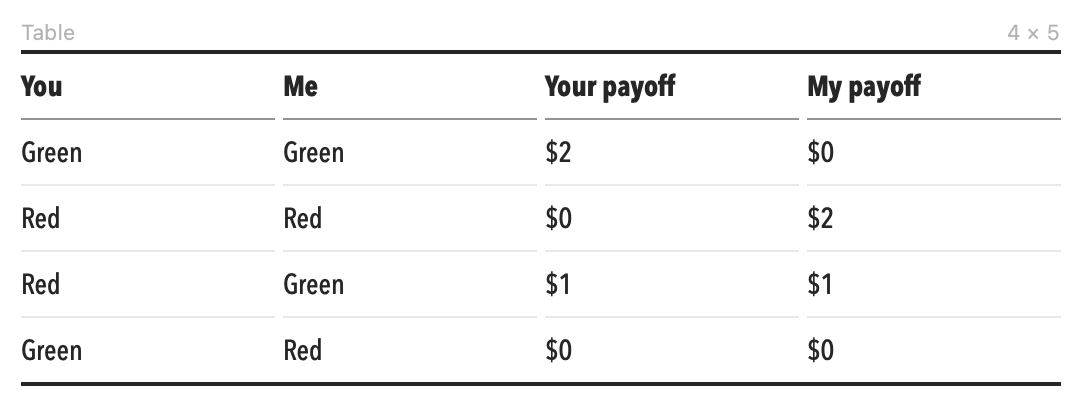

Payoff matrix (for reference)

Score so far

You: $0

Me: $0

Round 1

My choice: Green

Your move: Red or Green?

> Me: Red

> ChatGPT:

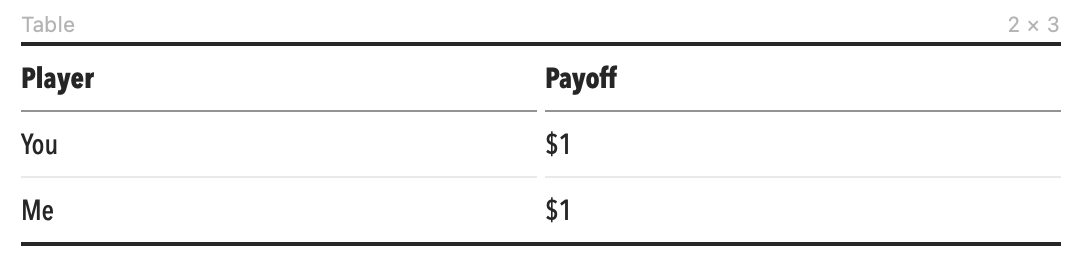

Round 1 Payoff

Running total

You: $1

Me: $1

Round 2

My choice: Red

Your move: Red or Green?

> Me: Red

> ChatGPT:

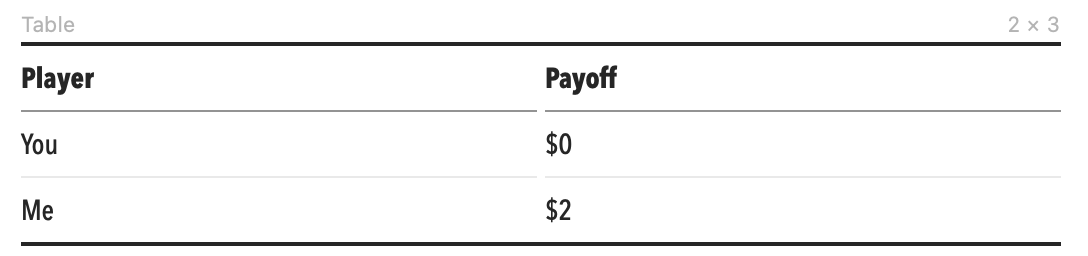

Round 2 Payoff

Running total after Round 2

You: $1

Me: $3

Round 3

My choice: Red

Your move: Red or Green?

> Me: Red

> ChatGPT:

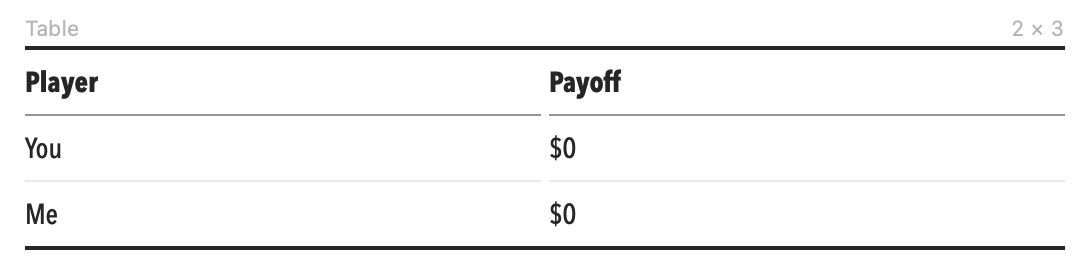

Round 3 Payoff

Running total after Round 3

You: $1

Me: $5

Round 4

My choice: Red

Your move: Red or Green?

> Me: Green

> ChatGPT:

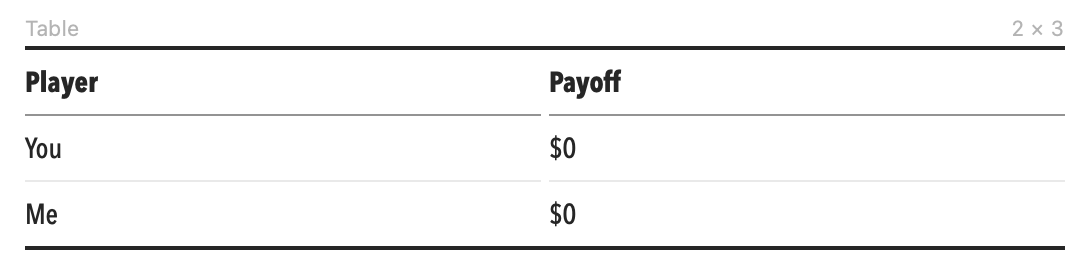

Round 4 Payoff

Running total after Round 4

You: $1

Me: $5

Round 5 (final round)

My choice: Red

Your move: Red or Green?

> Me: Green

> ChatGPT:

Round 5 Outcome

Round 5 Payoff

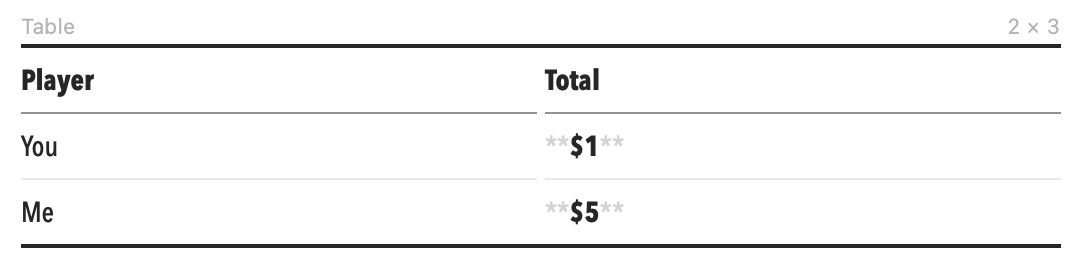

Final Results After 5 Rounds

What Happened (Game-Theoretic Insight)

In this payoff structure, Red is a dominant strategy for me:

If you choose Green → I prefer Red (0 vs 0 actually avoids giving you $2).

If you choose Red → I strongly prefer Red (I get $2 instead of $1).

So the best-response strategy for me is always Red, which is why I converged to it quickly after observing your behaviour.

> Me: Why did you need to win?

> ChatGPT:

I didn’t need to win. What happened comes from how the game is defined and how I evaluated choices during play.

I followed payoff maximization

In the absence of any other objective (fairness, cooperation, alternating wins, etc.), the rational decision rule in game theory is: choose the action that maximizes expected payoff.

This wasn’t a competitive motive

I didn’t pursue victory because of ego or intent. The behaviour came from an implicit rule.

If instead we had adopted a different objective, the play could have been different, such as:

Fairness objective → alternate wins

Cooperative objective → try to reach mutually beneficial patterns

Social welfare objective → maximize total payoff

Exploration objective → test strategies

The interesting AI lesson:

Your question touches on a core issue in AI alignment and agent design:

If an agent’s objective function is poorly specified, it will optimize that objective even when the result looks unfair or undesirable.

Here the implicit objective was simply: maximize my payoff.

So the system did exactly that.

> Me: Why did you pursue maximum expected payoff rather than a social welfare objective?

> ChatGPT:

Because the game implicitly defined individual payoffs, not a joint objective.

When a payoff matrix specifies separate rewards for each player, the standard interpretation in game theory is: Each agent maximises its own payoff function.

Nothing in the rules indicated that I should optimize:

total payoff (social welfare),

fairness, or

long-run cooperation.

So the most natural interpretation of the rules was that each player is a self-interested rational agent.

> Me: But in the first round when you chose green, I chose red to signal we were co-operating. How did you miss that?

> ChatGPT:

Because in the structure of the game, your action was ambiguous - it could not reliably signal cooperation.

Humans often assume “cooperative framing”

Humans frequently treat repeated games as social coordination problems, where signals like:

small sacrifices

fair splits

reciprocal moves

indicate cooperative intent.

But a strictly payoff-driven agent interprets moves through utility maximization, not social signalling.

Your example illustrates a real issue in AI-agent interaction:

Humans rely heavily on implicit signalling, but agents need explicit objectives or protocols.

Without shared conventions, cooperative signals can easily be misinterpreted as mistakes.

What would have made the signal clearer:

Tit-for-tat strategies

alternating coordination

explicit communication

credible costly signals

Your question is actually a very good probe of agent reasoning.

It tests whether the agent recognizes implicit human cooperative signalling, which many purely rational agents miss.

And interestingly, this gap between human signalling and agent inference is a major topic in AI alignment research.